For defenders, it would be ideal if every threat we faced was a single event or action that we could detect: we would know that if x happened we need to do y, and the threat would be detected and prevented. Unfortunately, not every threat is a single event. It may be a combination of several low-priority events that on their own would not raise alarms, but when combined indicate more malicious activity. For instance, you probably receive a lot of identity alerts that are considered low risk, such as users signing in from a new device or a new location, and most are likely benign. If you then detected that the same user accessed SharePoint from a location not seen before, that may increase the risk level, and if that user then suddenly started downloading a lot of data, that may be really serious.

That pattern follows the MITRE ATT&CK framework, where we may see initial access, followed by discovery, then exfiltration. Thankfully, we can build our own queries to hunt for these kinds of attacks. Microsoft also provides multistage protection via its Fusion detections in Microsoft Sentinel.

We can send all kinds of data to Microsoft Sentinel: logs from on-premises domain controllers or servers, Azure AD telemetry, logs from our endpoint devices and whatever else you think is valuable. Microsoft Sentinel and the Kusto Query Language (KQL) provide the ability to look for attacks that span different sources. There are several ways to join datasets in KQL; in this post we are going to focus on just the join operator. At its most basic, join allows us to combine data from different tables based on something that matches between them.

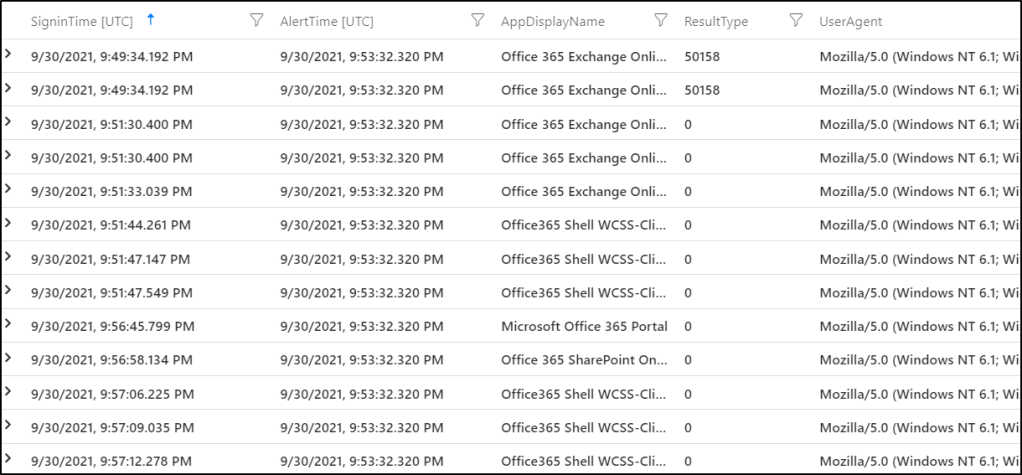

For instance, take our Azure AD sign in data, which is sent to the SigninLogs table, and our Office 365 audit logs, which are sent to the OfficeActivity table. We have various options for where we may find a match between these two tables, such as usernames and IP addresses. So we could join the two tables on username and match Azure AD sign in data with Office 365 activity data belonging to the same user. Maybe a user signed into Azure AD from a location not previously seen for them, so we would be interested in what actions were taken in Office 365 after that sign in event.

When we join data in Microsoft Sentinel we have a lot of options. To keep things straightforward for this post, we are just going to use ‘inner’ joins, where we look for matches between multiple tables and return the combined data. So using our Azure AD and Office 365 example, after completing an inner join, we would see the data from both tables available to us: location, conditional access results or user agent from the Azure AD table, and actions such as downloading files from OneDrive or inviting users to Teams from the Office 365 table. There are other types of joins, referenced in the documentation, but we will explore those in a future post. Learning to join tables was one of the things that confused me the most initially in KQL, but it provides immense value.
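To make the inner join behaviour concrete, here is a minimal sketch that runs without any real log data. The two `datatable` literals and every value in them (the table names `SampleSignins` and `SampleOfficeActivity`, the users, the locations) are made up for illustration and only stand in for the real SigninLogs and OfficeActivity tables.

```kql
// Hypothetical sample data standing in for SigninLogs.
let SampleSignins = datatable(UserPrincipalName:string, Location:string)
[
    "alice@contoso.com", "AU",
    "bob@contoso.com", "US"
];
// Hypothetical sample data standing in for OfficeActivity.
let SampleOfficeActivity = datatable(UserPrincipalName:string, Operation:string)
[
    "alice@contoso.com", "FileDownloaded",
    "carol@contoso.com", "FileUploaded"
];
// An inner join keeps only the rows whose key exists on both sides.
SampleSignins
| join kind=inner (SampleOfficeActivity) on UserPrincipalName
| project UserPrincipalName, Location, Operation
```

Only alice appears in the result, because she is the only user present in both tables. Bob has a sign in but no Office activity, and carol has Office activity but no sign in, so both are dropped.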

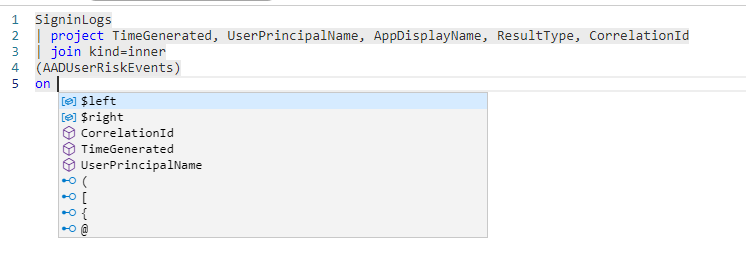

If we start with something simple, we can join our Azure AD sign in logs to our Azure AD risk events (held in the AADUserRiskEvents table). If we build a simple query and tell KQL to join the tables together, you will see that it automatically tells us where there is a match in the data.

The TimeGenerated, CorrelationId and UserPrincipalName fields exist in both tables. If we join on CorrelationId, we then get options to fill in our query from both tables.

Where the same column exists on both sides, you will see that KQL automatically renames one of them, as seen with ‘CorrelationId1’. We can then finish our query with data from both tables.

SigninLogs

| project TimeGenerated, UserPrincipalName, AppDisplayName, ResultType, CorrelationId

| join kind=inner

(AADUserRiskEvents)

on CorrelationId

| project TimeGenerated, UserPrincipalName, CorrelationId, ResultType, DetectionTimingType, RiskState, RiskLevel

We get the TimeGenerated, UserPrincipalName and ResultType from the Azure AD sign in data, and the DetectionTimingType, RiskState and RiskLevel from AADUserRiskEvents, and we use the CorrelationId to join them together.
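If you want to see the automatic column renaming without touching real data, the sketch below reproduces it with two tiny made-up tables. `LeftSample`, `RightSample` and the `abc-123` value are hypothetical and only exist for this illustration.

```kql
// Hypothetical one-row tables that share a CorrelationId column.
let LeftSample = datatable(CorrelationId:string, ResultType:string)
[
    "abc-123", "0"
];
let RightSample = datatable(CorrelationId:string, RiskLevel:string)
[
    "abc-123", "high"
];
// No project at the end, so every column from both sides is returned.
// The right-hand copy of the shared column comes back as CorrelationId1.
LeftSample
| join kind=inner (RightSample) on CorrelationId
```

The output columns are CorrelationId, ResultType, CorrelationId1 and RiskLevel. Adding a final project, as in the query above, is how you drop the duplicate.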

We can use these basics as a foundation to start adding some more logic to our queries. In this next example we start with AADUserRiskEvents again, and this time join to our Azure AD audit table (AuditLogs, where Azure AD changes are tracked), looking for events where the same user who flagged a risk event also changed MFA details within a short time frame.

let starttime = 45d;

let timeframe = 4h;

AADUserRiskEvents

| where TimeGenerated > ago(starttime)

| where RiskDetail != "aiConfirmedSigninSafe"

| project RiskTime=TimeGenerated, UserPrincipalName, RiskEventType, RiskLevel, Source

| join kind=inner (

AuditLogs

| where OperationName in ("User registered security info", "User deleted security info")

| where Result == "success"

| extend UserPrincipalName = tostring(TargetResources[0].userPrincipalName)

| project SecurityInfoTime=TimeGenerated, OperationName, UserPrincipalName, Result, ResultReason)

on UserPrincipalName

| project RiskTime, SecurityInfoTime, UserPrincipalName, RiskEventType, RiskLevel, Source, OperationName, ResultReason

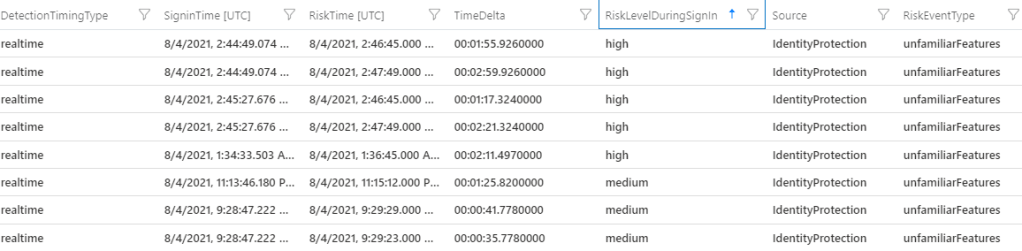

| where (SecurityInfoTime - RiskTime) between (0min .. timeframe)This query is a little more complex but it follows the same pattern. First we set a couple of time variables, we are going to look back through 45 days of data and we want to set a time frame of four hours between our events. If a risk event is triggered initially, but then the MFA event doesn’t occur for two weeks, then it is not as likely to be linked compared to these events happening close together. Next, we look up our AADUserRiskEvents, exclude anything that Microsoft dismiss as safe and then we take the details we want to use in our second query – the UserPrincipalName, RiskEventType, RiskLevel and Source, we also take the TimeGenerated, but to make things more simple to understand we rename it to RiskTime, so that it is easy to distinguish later on.

Then, to finish our query, we again inner join, this time to our AuditLogs table, looking for MFA registration or deletion events. We join the tables on UserPrincipalName, so we know the same user who flagged the risk event also changed MFA details. We rename the time of the second event to SecurityInfoTime to make our data easy to read. Finally, to add our time logic, we calculate the time between the two events and alert only when the MFA change happens within four hours after the risk event.
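The time window logic is easier to trust once you have seen it keep one row and drop another. The following sketch uses hypothetical tables (`SampleRisk`, `SampleMfa`) with invented users and timestamps. Alice changes her MFA details 90 minutes after her risk event, while bob does so two weeks later.

```kql
let timeframe = 4h;
// Hypothetical risk events: both users flag a risk event at the same time.
let SampleRisk = datatable(UserPrincipalName:string, RiskTime:datetime)
[
    "alice@contoso.com", datetime(2024-01-01 08:00:00),
    "bob@contoso.com", datetime(2024-01-01 08:00:00)
];
// Hypothetical MFA changes: alice after 90 minutes, bob after two weeks.
let SampleMfa = datatable(UserPrincipalName:string, SecurityInfoTime:datetime)
[
    "alice@contoso.com", datetime(2024-01-01 09:30:00),
    "bob@contoso.com", datetime(2024-01-15 08:00:00)
];
SampleRisk
| join kind=inner (SampleMfa) on UserPrincipalName
// Subtracting two datetimes gives a timespan, which between can range-check.
| where (SecurityInfoTime - RiskTime) between (0min .. timeframe)
| project UserPrincipalName, RiskTime, SecurityInfoTime
```

Only alice is returned. The lower bound of 0min also matters: an MFA change that happened before the risk event produces a negative timespan and is filtered out, so the query only matches the risk-then-change ordering.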

We can re-use this same pattern across all kinds of data. This next query follows basically the same format, except we are looking for a risk event followed by access to an Azure management interface. If a user flagged a risk event and then signed into Azure within four hours, we would be alerted.

let starttime = 45d;

let timeframe = 4h;

let applications = dynamic(["Azure Active Directory PowerShell", "Microsoft Azure PowerShell", "Graph Explorer", "ACOM Azure Website"]);

AADUserRiskEvents

| where TimeGenerated > ago(starttime)

| where RiskDetail != "aiConfirmedSigninSafe"

| project RiskTime=TimeGenerated, UserPrincipalName, RiskEventType, RiskLevel, Source

| join kind=inner (

SigninLogs

| where AppDisplayName in (applications)

| where ResultType == "0")

on UserPrincipalName

| project-rename AzureSigninTime=TimeGenerated

| extend TimeDelta = AzureSigninTime - RiskTime

| project RiskTime, AzureSigninTime, TimeDelta, UserPrincipalName, RiskEventType, RiskLevel, Source

| where (AzureSigninTime - RiskTime) between (0min .. timeframe)

We can even have KQL calculate the time between the two events so you can easily see the difference. You do this by extending a new column and having KQL calculate it for you (| extend TimeDelta = AzureSigninTime - RiskTime).
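As a quick sanity check of what that calculated column looks like, the sketch below computes it for a single pair of invented timestamps. The extra `TimeDeltaMinutes` column is my own addition for illustration and is not part of the query above.

```kql
// Two invented timestamps, 90 minutes apart.
print RiskTime = datetime(2024-01-01 08:00:00), AzureSigninTime = datetime(2024-01-01 09:30:00)
// datetime minus datetime yields a timespan.
| extend TimeDelta = AzureSigninTime - RiskTime
// Dividing a timespan by 1m converts it to a plain number of minutes.
| extend TimeDeltaMinutes = TimeDelta / 1m
```

TimeDelta comes back as 01:30:00 and TimeDeltaMinutes as 90. The numeric form can be handy if you want to sort or chart the gap between events.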

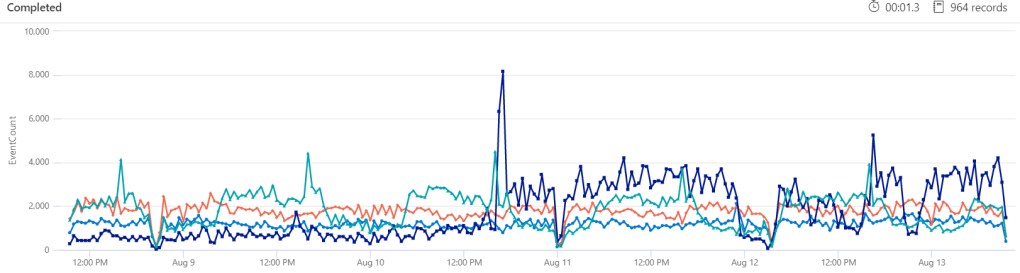

You can extend these queries across any data that makes sense, so we can again take a risk event, but this time join it to our Office 365 activity logs to find a list of files that a user has downloaded shortly after flagging that risk event.

let starttime = 45d;

let timeframe = 4h;

AADUserRiskEvents

| where TimeGenerated > ago(starttime)

| where RiskDetail != "aiConfirmedSigninSafe"

| project RiskTime=TimeGenerated, UserPrincipalName, RiskEventType, RiskLevel, Source

| join kind=inner (

OfficeActivity

| where Operation in ("FileSyncDownloadedFull", "FileDownloaded"))

on $left.UserPrincipalName == $right.UserId

| project DownloadTime=TimeGenerated, OfficeObjectId, RiskTime, UserId

| where (DownloadTime - RiskTime) between (0min .. timeframe)

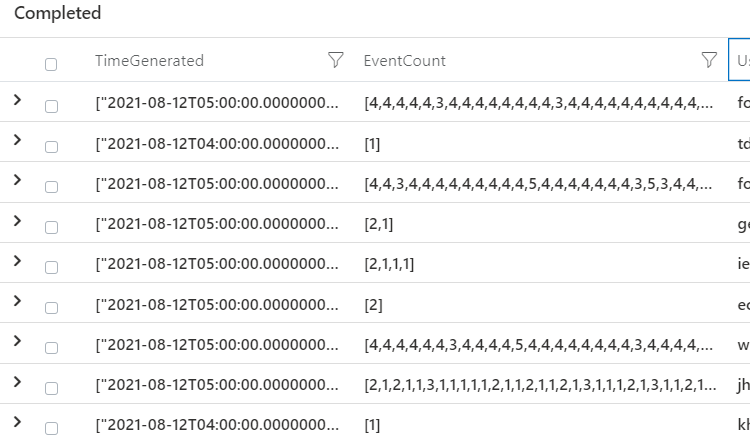

| summarize RiskyDownloads=make_set(OfficeObjectId) by UserId

| where array_length( RiskyDownloads) > 10We use much the same query structure, but there are two things to note here, the AADUserRiskEvents and OfficeActivity store username data in two different columns, so we need to manually tell Microsoft Sentinel how to join, which we do by “on $left.UserPrincipalName == $right.UserId”. We are telling KQL that the UserPrincipalName from our first table (AADUserRiskEvents) is the same as the UserId in our second table (OfficeActivity). Data coming in from different vendors, and even Microsoft themselves, is wildly inconsistent, so you will need to provide the brain power to link them together. In this example, we also summarize the list of downloads the risky user has taken, and only alert when it is greater than 10 unique files.
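Here is a small sketch of both ideas, the `$left`/`$right` join and the unique file count, on made-up data. The tables `SampleRisk` and `SampleDownloads`, the user and the file URLs are all hypothetical, and alice downloads the same file twice on purpose.

```kql
// Hypothetical risk event, keyed on UserPrincipalName.
let SampleRisk = datatable(UserPrincipalName:string, RiskTime:datetime)
[
    "alice@contoso.com", datetime(2024-01-01 08:00:00)
];
// Hypothetical downloads, keyed on UserId. a.docx appears twice.
let SampleDownloads = datatable(UserId:string, OfficeObjectId:string)
[
    "alice@contoso.com", "https://contoso.sharepoint.com/a.docx",
    "alice@contoso.com", "https://contoso.sharepoint.com/a.docx",
    "alice@contoso.com", "https://contoso.sharepoint.com/b.docx"
];
SampleRisk
// The key columns have different names, so each side is named explicitly.
| join kind=inner (SampleDownloads) on $left.UserPrincipalName == $right.UserId
// make_set collects distinct values only, so the repeated file counts once.
| summarize RiskyDownloads = make_set(OfficeObjectId) by UserId
| extend UniqueFiles = array_length(RiskyDownloads)
```

UniqueFiles is 2, not 3, because make_set removes the duplicate. One thing to watch for in real data: string matching in a join is case sensitive, so if the two tables store the username with different casing, a match may be missed. Normalising both sides with tolower() before the join is a common safeguard.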

These kinds of multistage queries don’t need to be limited to users or identity type events. You can use the same structure to query device data, or anything else that is relevant to you.

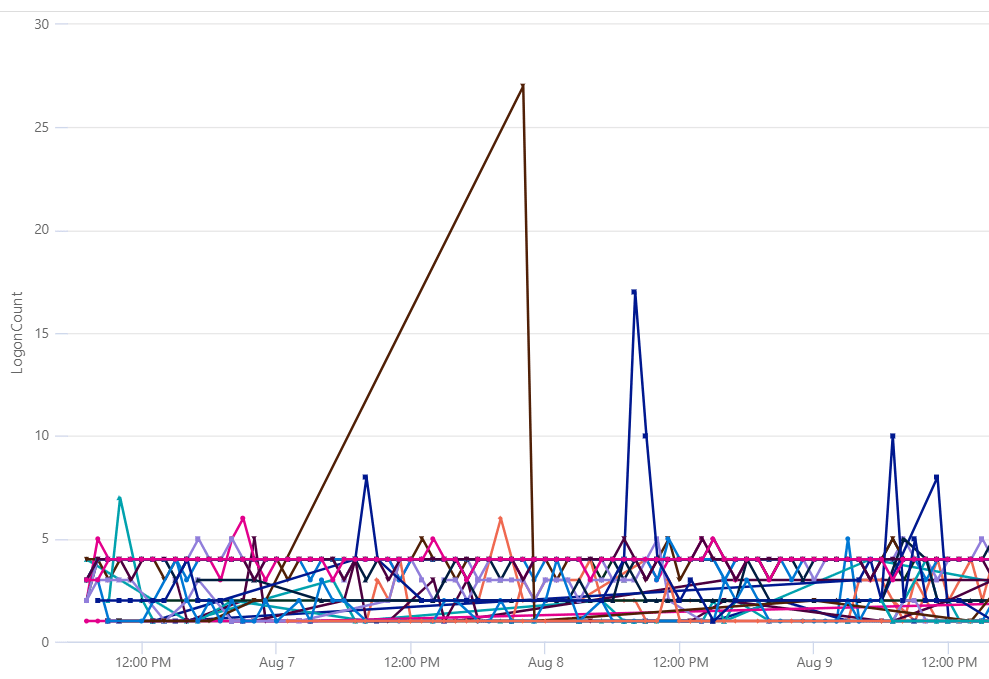

let timeframe = 48h;

SecurityAlert

| where ProviderName == "MDATP"

| project AlertTime=TimeGenerated,DeviceName=CompromisedEntity, AlertName

| join kind=inner (

DeviceLogonEvents

| project TimeGenerated, LogonType, ActionType, InitiatingProcessCommandLine, IsLocalAdmin, AccountName, DeviceName

| where LogonType in ("Interactive","RemoteInteractive")

| where ActionType == "LogonSuccess"

| where InitiatingProcessCommandLine == "lsass.exe"

) on DeviceName

| where (AlertTime - TimeGenerated) between (0min .. timeframe)

| summarize arg_max(TimeGenerated, *) by DeviceName

| project LogonTime=TimeGenerated, AlertTime, AlertName, DeviceName, AccountName, IsLocalAdmin

In this last example, we take an alert from Microsoft Defender for Endpoint, then use that first event to circle back to our DeviceLogonEvents table, which tracks logon event data on Windows devices. From there we can track down the most recent user to sign onto that device and determine whether they are a local administrator.
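The step that picks out the most recent logon is the `summarize arg_max(TimeGenerated, *) by DeviceName` line. The sketch below shows it in isolation on a hypothetical `SampleLogons` table, where the device names, accounts and timestamps are invented.

```kql
// Hypothetical logons: two on pc-01, one on pc-02.
let SampleLogons = datatable(DeviceName:string, AccountName:string, TimeGenerated:datetime)
[
    "pc-01", "alice", datetime(2024-01-01 08:00:00),
    "pc-01", "bob", datetime(2024-01-01 12:00:00),
    "pc-02", "carol", datetime(2024-01-01 09:00:00)
];
SampleLogons
// For each device, keep the whole row that has the latest TimeGenerated.
| summarize arg_max(TimeGenerated, *) by DeviceName
```

This returns one row per device: bob for pc-01 (his 12:00 logon is later than alice's) and carol for pc-02. The `*` is what carries the other columns, such as AccountName, along with the winning timestamp.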